Introduction

By some estimates, the world's data now exceeds 180 zettabytes. Sifting through even a fraction of it manually to find and verify useful information is not realistic. Engineering teams and research units need programmatic access to work with data at this scale.

A web search tool running on an application programming interface (API) solves this problem. It feeds live web information into your software systems.

Today, engineers and analysts rely on these endpoints to support artificial intelligence (AI), automate deep research, and monitor global market movements without any human input.

What Is a Web Search API?

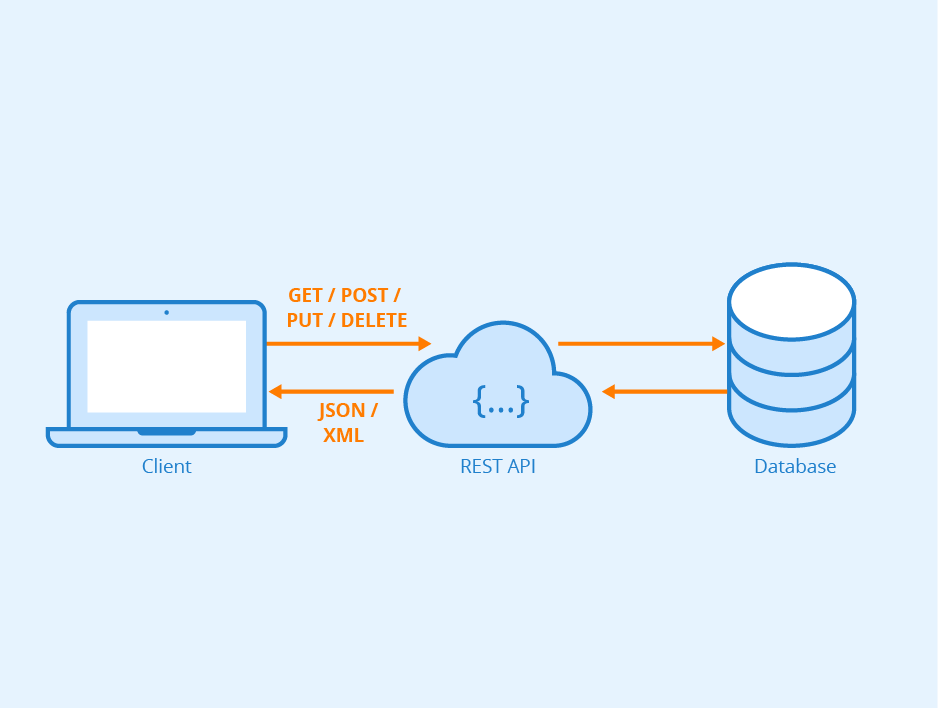

A web search API is a programmatic interface that lets applications query the internet and pull structured search results.

You write code that sends a search query to a server, and the server returns the matching data to your application.

Traditional search engines like Google or Bing display blue links and advertisements formatted for people reading in a web browser. An API strips away the graphical user interface and focuses entirely on delivering raw, machine-readable data.

When you perform a manual search, you type a query, scan the page, click a link, and copy the text into a spreadsheet.

An API search bypasses the browser by sending an HTTP request directly from a server, allowing for the instant ingestion of the response into a database or application logic.
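As a rough sketch of what such a request can look like in Python, the snippet below builds (but does not send) an HTTP GET request using only the standard library. The endpoint URL, the `x-api-token` header, and the `q` and `lang` parameter names are placeholders assumed for illustration, not any specific provider's API; the real names come from your provider's documentation.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Hypothetical endpoint, used only for illustration.
BASE_URL = "https://api.example.com/v1/search"


def build_search_request(query: str, api_key: str, lang: str = "en") -> Request:
    """Build a GET request for a hypothetical search endpoint (nothing is sent)."""
    params = urlencode({"q": query, "lang": lang})
    return Request(
        f"{BASE_URL}?{params}",
        # The header name is an assumption; providers differ on how keys are passed.
        headers={"x-api-token": api_key, "Accept": "application/json"},
        method="GET",
    )


request = build_search_request("electric vehicle tariffs", api_key="YOUR_API_KEY")
print(request.full_url)
```

Passing the resulting object to `urllib.request.urlopen` would send it; the response body could then be decoded with the `json` module and handed to a database or application logic.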

How Does a Web Search API Work?

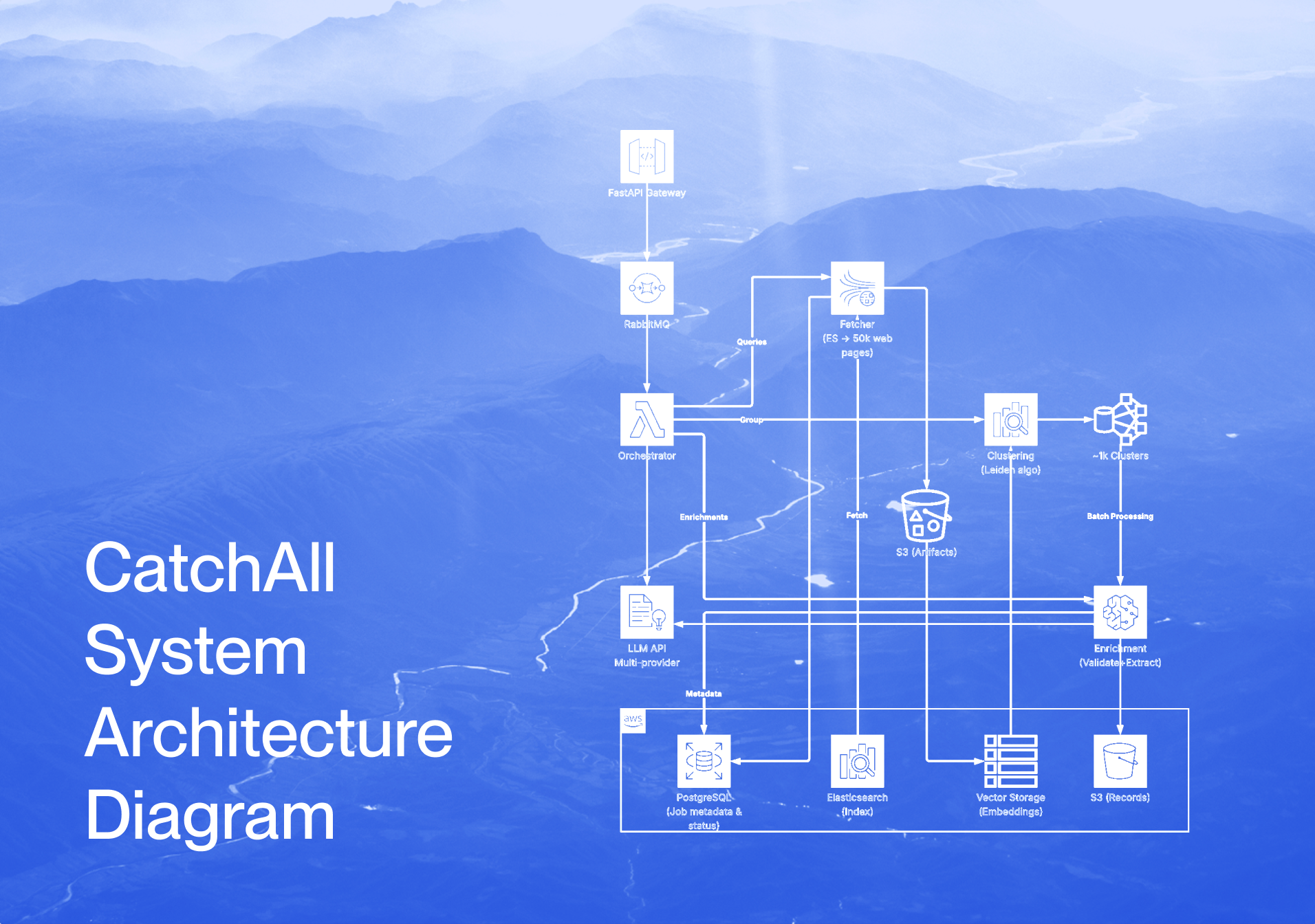

A web search API works by automating the collection of internet data through request processing, index searching, and the delivery of structured results. The process starts with a query to the API endpoint and ends with the return of curated web content.

The end-to-end flow involves four key stages:

- Request: Your application sends an HTTP GET or POST request that specifies parameters such as keywords, dates, and language settings.

- Processing: Upon receiving the query, the API backend scans its existing data or activates live crawlers to find and filter relevant information.

- Response: The relevant data is bundled into a machine-readable format and sent back over a secure link.

- Integration: Your application parses the data and feeds it to a database, a visualization dashboard, or an LLM context window.

Efficient APIs deliver structured data (JSON) rather than raw HTML. While HTML scraping is prone to breaking when site layouts change, JSON offers consistent key-value pairs like publish_date and fulltext that developers can map to their data models.
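The integration stage can be sketched as follows. The sample payload is made up, and the `results` wrapper and `title` key are assumptions; only `publish_date` and `fulltext` come from the text above. The point is simply that stable keys map cleanly onto a typed data model.

```python
import json
from dataclasses import dataclass
from datetime import datetime

# Made-up sample payload; real responses have a provider-specific shape.
SAMPLE_RESPONSE = """
{
  "results": [
    {"title": "Port strike ends", "publish_date": "2024-05-01T09:30:00",
     "fulltext": "Dock workers returned to work on Wednesday."},
    {"title": "Untitled", "publish_date": "2024-05-02T14:00:00"}
  ]
}
"""


@dataclass
class Article:
    title: str
    published: datetime
    text: str


def parse_articles(payload: str) -> list[Article]:
    """Map each JSON result onto an Article, tolerating a missing fulltext."""
    data = json.loads(payload)
    return [
        Article(
            title=item["title"],
            published=datetime.fromisoformat(item["publish_date"]),
            text=item.get("fulltext", ""),
        )
        for item in data.get("results", [])
    ]


articles = parse_articles(SAMPLE_RESPONSE)
for article in articles:
    print(article.published.date(), article.title, len(article.text))
```

Because the keys stay the same from one response to the next, this mapping does not need to change when a source site redesigns its pages, which is the main advantage over parsing HTML.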

Which Data Sources Do Web Search APIs Use?

Web search APIs get information from a wide variety of platforms, including global news outlets, niche blogs, academic repositories, and social discussion forums.

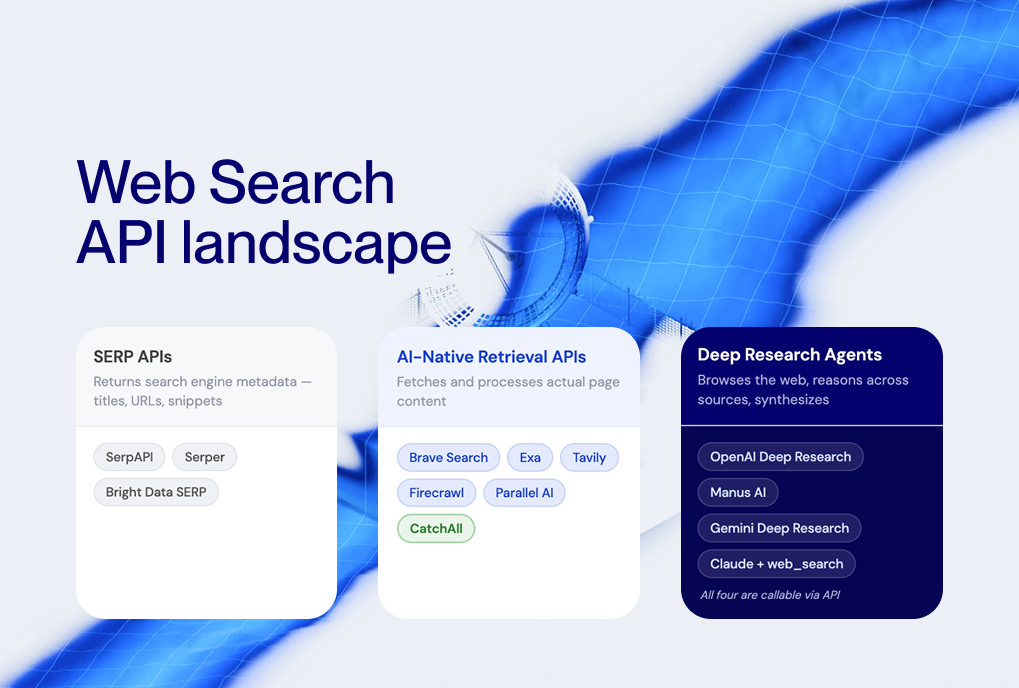

Some APIs act as wrappers for search engines like Bing, while others, such as NewsCatcher’s CatchAll, depend on proprietary, independent indices. Owning the index allows them to better support time-sensitive needs like real-world news.

For accuracy and freshness, these indices are continuously updated by high-performance systems that index upwards of 2 billion pages daily.

How Do Search APIs Support Automation and Data Pipelines?

Search APIs support automation by acting as a live intelligence layer within a data pipeline, which lets systems make autonomous decisions based on real-time internet signals.

In a standard pipeline, the web search API provides the raw input. Next, an AI model cleans and analyzes that input, and the result triggers specific business actions, like sending a sales alert or updating a financial model.
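A minimal sketch of that flow is shown below, under loud assumptions: `fetch_results` is a stub returning hard-coded records instead of calling a real API, and a simple keyword rule stands in for the AI analysis step. All function names are hypothetical.

```python
from typing import Callable


def fetch_results(query: str) -> list[dict]:
    """Stand-in for a real search API call; returns hard-coded records."""
    return [
        {"title": "  Acme Corp announces price cut  ", "url": "https://example.com/a"},
        {"title": "Acme Corp opens new office", "url": "https://example.com/b"},
        {"title": "", "url": "https://example.com/c"},
    ]


def clean(records: list[dict]) -> list[dict]:
    """Trim whitespace and drop records without a title."""
    cleaned = [{**r, "title": r["title"].strip()} for r in records]
    return [r for r in cleaned if r["title"]]


def is_pricing_signal(record: dict) -> bool:
    """Keyword rule standing in for the AI analysis step."""
    return "price" in record["title"].lower()


def run_pipeline(query: str, notify: Callable[[str], None]) -> int:
    """Fetch, clean, analyze, and trigger one alert per matching record."""
    matches = [r for r in clean(fetch_results(query)) if is_pricing_signal(r)]
    for record in matches:
        notify(f"Sales alert: {record['title']} ({record['url']})")
    return len(matches)


alerts: list[str] = []
alert_count = run_pipeline("Acme Corp", notify=alerts.append)
print(alert_count, alerts)
```

Passing the action in as the `notify` callable keeps the pipeline independent of where alerts go, so the same code could post to a chat channel or update a model input.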

What Are the Key Features of Modern Web Search APIs?

Modern web search APIs deliver high-fidelity data at an industrial scale. Developers and analysts must evaluate these core technical features to choose the right tool.

- Real-time data access: APIs provide low-latency connectivity to the live web, allowing developers to build applications that instantly reflect events. That immediate access allows analysts and businesses to respond to market shifts in minutes. For instance, hedge funds can integrate breaking news directly into automated trading algorithms to maintain a competitive edge.

- Structured data output: By returning results in JSON, APIs replace fragile HTML parsing and regex scraping with reliable data pipelines. Analysts can pipe the structured results directly into BI tools like Tableau without manual cleaning, which removes a common source of errors.

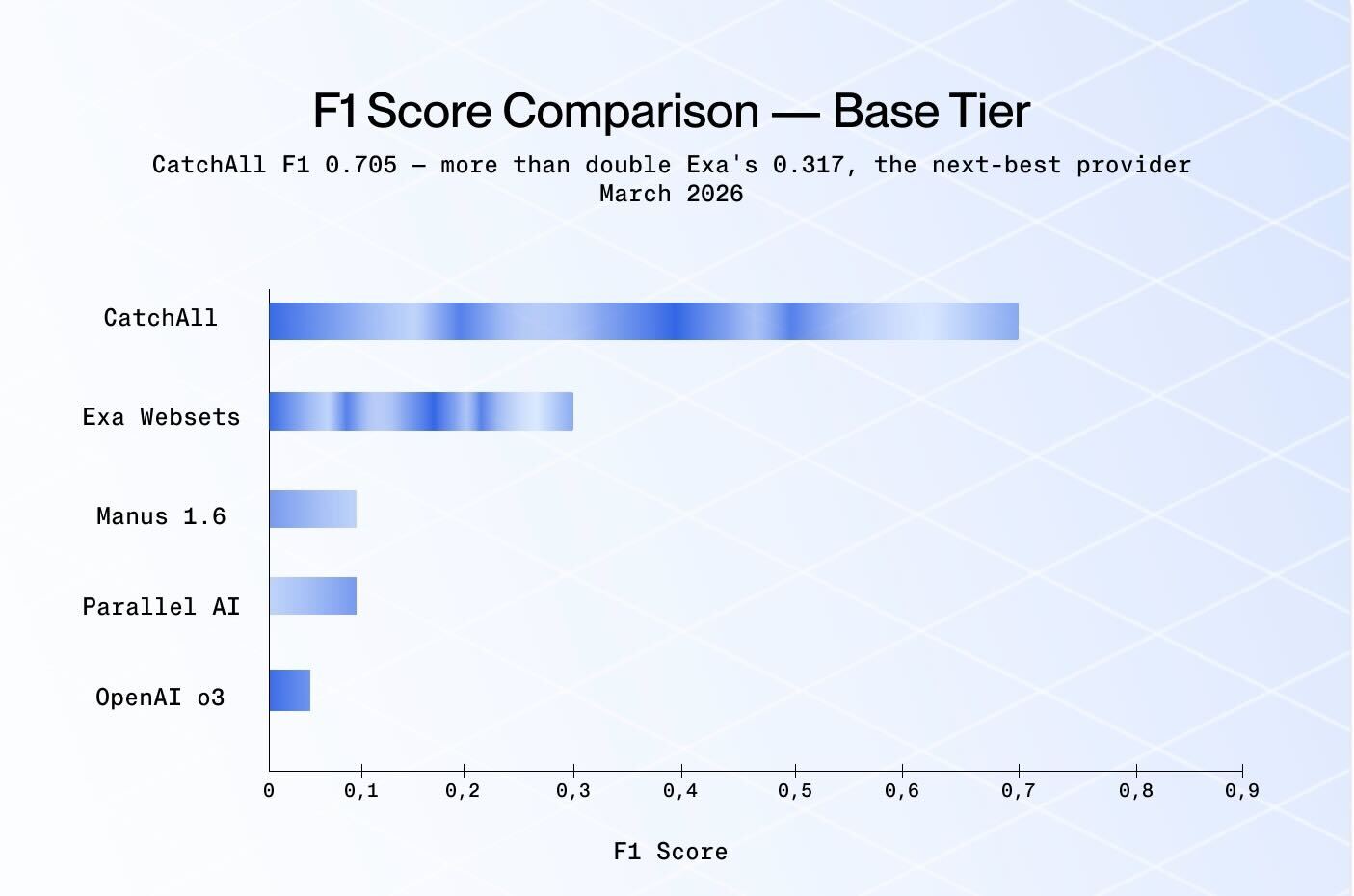

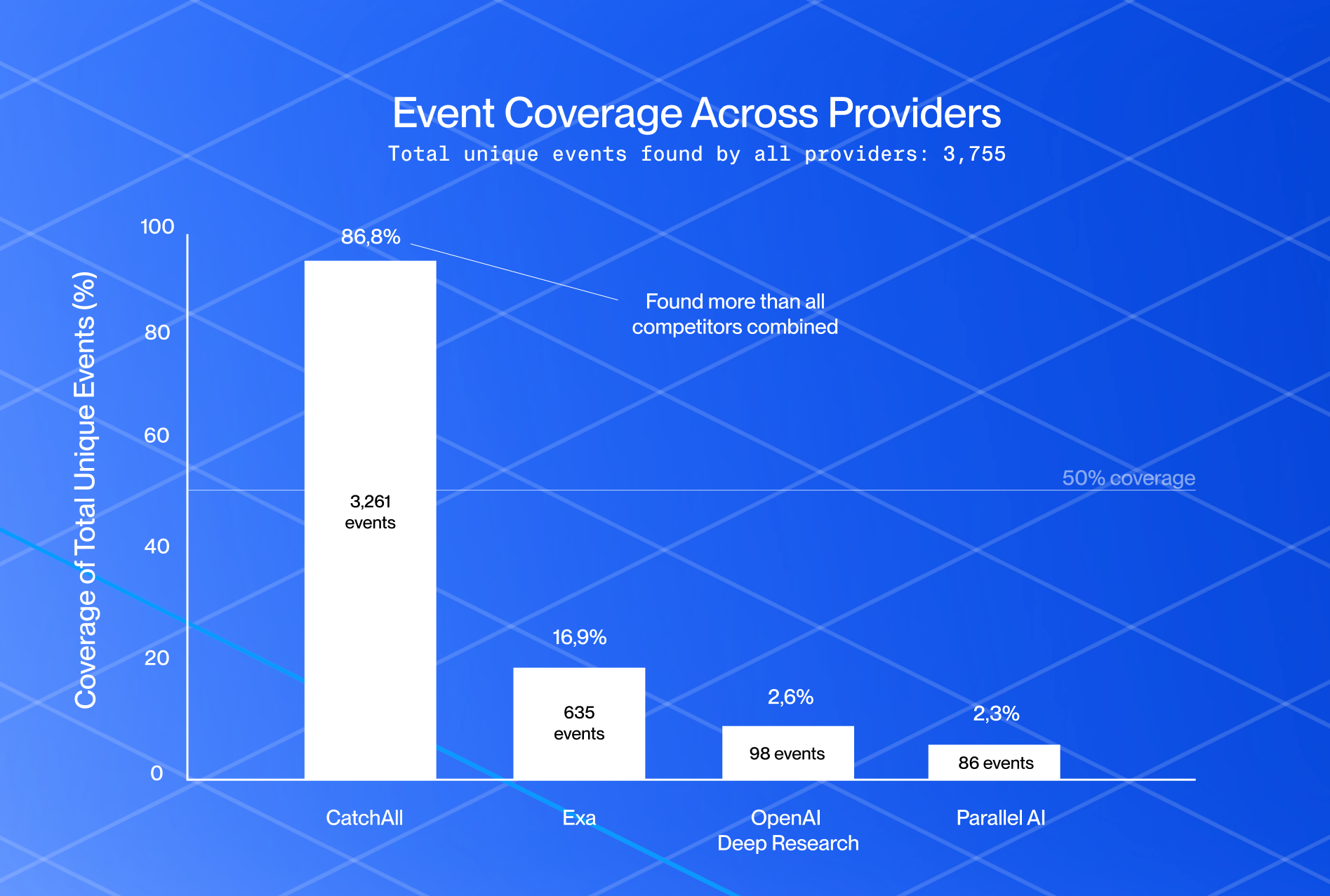

- Recall-first search: Unlike standard consumer engines that rank popular content first, recall-first search aims to surface every relevant document, even from obscure domains. The CatchAll web search API is designed for these deep intelligence tasks where missing a single report could compromise a strategic analysis.

- Filtering and query capabilities: Advanced APIs support complex Boolean operators, proximity searches, and metadata filtering, such as lang:en or source_url:*.gov. Using them, developers can lower compute costs while analysts isolate hyper-specific segments, such as monitoring regulatory changes within particular government domains.

- Scalability and performance: Enterprise-grade APIs handle high concurrency and support thousands of requests per second (RPS) without throughput degradation. Data-heavy businesses can scale their operations globally without the API becoming a bottleneck, and developers benefit from a system that remains stable even during major global news surges.

- Integration with AI tools: Many search APIs now include native support for RAG (Retrieval-Augmented Generation) frameworks such as LangChain, giving LLMs access to the internet in real time. This helps ground AI agents in verifiable facts and reduces hallucinations. For example, an AI site search tool can use this to answer customer queries with up-to-the-minute product information.
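To make the filtering and query capabilities above more concrete, here is a small sketch of a query builder. The `AND`/`OR` operators, the phrase quoting, and the way filters are appended are assumptions modeled on the `lang:en` and `source_url:*.gov` examples; actual query syntax is provider-specific.

```python
from typing import Mapping, Optional, Sequence


def quote(term: str) -> str:
    """Wrap multi-word terms in double quotes so they match as a phrase."""
    return f'"{term}"' if " " in term else term


def build_query(
    all_of: Sequence[str],
    any_of: Sequence[str] = (),
    filters: Optional[Mapping[str, str]] = None,
) -> str:
    """Combine required terms, optional alternatives, and key:value filters."""
    parts = [" AND ".join(quote(t) for t in all_of)]
    if any_of:
        parts.append("(" + " OR ".join(quote(t) for t in any_of) + ")")
    query = " AND ".join(p for p in parts if p)
    # Filter syntax (key:value) is assumed from the examples in the text.
    for key, value in (filters or {}).items():
        query += f" {key}:{value}"
    return query.strip()


query = build_query(
    all_of=["emissions", "final rule"],
    any_of=["EPA", "Department of Energy"],
    filters={"lang": "en", "source_url": "*.gov"},
)
print(query)
# emissions AND "final rule" AND (EPA OR "Department of Energy") lang:en source_url:*.gov
```

Narrowing the query on the server side like this means fewer irrelevant results to download and post-process, which is where the compute savings come from.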

What Are Common Use Cases for Web Search APIs?

Organizations deploy web search APIs to gain a competitive edge through automated monitoring, AI grounding, and massive-scale data collection.

1. Market Intelligence Automation

Companies use web search APIs to programmatically monitor competitors' technical signals and macroeconomic trends. Automated querying of thousands of domains reduces human error and latency compared to manual audits.

For instance, an analyst can track real-time changes in documentation or pricing via specific keywords.

For specialized information, developers can implement CatchAll to capture real-world events like niche industry news or government tenders that traditional search engines often omit.

2. AI and LLM Grounding

Roughly 75% of developers rely on AI tools, but language models still struggle with knowledge cutoffs and hallucinations.

A web search API provides a retrieval layer for RAG architectures, feeding live internet data into the model's context window.

This allows developers to create accurate, context-aware AI agents by grounding responses in current facts.

For instance, a financial AI agent can use the API to pull stock market news from the past hour and ensure investment advice is based on current data.
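The retrieval layer can be sketched as a function that turns search results into a grounded prompt. The result fields (`title`, `snippet`, `url`) and the sample records are invented for illustration, and a character budget is used here as a crude stand-in for a real token budget.

```python
def build_grounded_prompt(question: str, results: list[dict], max_chars: int = 500) -> str:
    """Number each result as a source and stop before the context budget is exceeded."""
    context_lines = []
    used = 0
    for index, result in enumerate(results, start=1):
        line = f"[{index}] {result['title']}: {result['snippet']} ({result['url']})"
        if used + len(line) > max_chars:
            break
        context_lines.append(line)
        used += len(line)
    context = "\n".join(context_lines)
    return (
        "Answer using only the sources below and cite them by number.\n\n"
        f"Sources:\n{context}\n\n"
        f"Question: {question}"
    )


# Invented sample records standing in for live search results.
RESULTS = [
    {"title": "Chipmaker raises guidance",
     "snippet": "Shares rose after the earnings call.",
     "url": "https://example.com/1"},
    {"title": "Central bank holds rates",
     "snippet": "Policy makers left rates unchanged.",
     "url": "https://example.com/2"},
]

prompt = build_grounded_prompt("What moved markets in the past hour?", RESULTS)
print(prompt)
```

The resulting string would be sent to whichever model the agent uses. Numbering the sources lets the model cite them, so a reader can check each claim against the original page.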

3. Research Automation Pipelines

Analysts automate the discovery and ingestion of specialized documents using targeted API queries. This replaces manual retrieval and lets researchers build custom datasets from millions of unstructured web pages.

Bulk harvesting through the API also supports automatic extraction of critical metadata into databases. This is essential for hedge funds or legal teams that cross-reference thousands of sources to validate an investment strategy or legal position.

4. AI Site Search and Custom Website Software

Developers use a web search tool to create internal website search software that aggregates data from corporate blogs and documentation.

Using an API allows teams to implement features like faceted filtering and autocomplete without the cost of a custom crawler.

Developers often use the free tier of a web search API to test AI site search features before full-scale deployment.
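As an illustration of the faceted filtering and autocomplete features mentioned above, the sketch below runs both over a tiny in-memory list. The documents and the `section` field are invented; in practice they would come from the search API's structured results.

```python
from collections import Counter

# Invented documents standing in for indexed blog and documentation pages.
DOCUMENTS = [
    {"title": "Getting started with the API", "section": "docs"},
    {"title": "Getting the most from filters", "section": "blog"},
    {"title": "Rate limits explained", "section": "docs"},
]


def facet_counts(documents: list[dict], field: str) -> dict[str, int]:
    """Count how many documents fall under each value of a field."""
    return dict(Counter(doc[field] for doc in documents))


def autocomplete(documents: list[dict], prefix: str, limit: int = 5) -> list[str]:
    """Return titles that start with the typed prefix, case-insensitively."""
    needle = prefix.lower()
    matches = [d["title"] for d in documents if d["title"].lower().startswith(needle)]
    return sorted(matches)[:limit]


print(facet_counts(DOCUMENTS, "section"))
print(autocomplete(DOCUMENTS, "getting"))
```

A linear scan like this is only reasonable for small collections; it is meant to show the shape of the two features, not how a production index implements them.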

How to Choose the Right Web Search API

Selecting the right web search tool requires balancing data completeness against engineering speed. Developers and analysts should use the following technical criteria to evaluate providers for their data pipelines.

- Data Quality vs. Quantity: While total volume is a common metric, quality APIs focus on recall (the ability to find the web's long tail, including niche forums and local news often missed by mainstream engines). For example, recall-first APIs like CatchAll are essential for deep intelligence projects where an overlooked data point could invalidate a research model.

- Ease of Integration: A modern API should offer a fast time-to-first-request through REST documentation, boilerplate examples, and SDKs for Python or Node.js. Quality integration reduces technical debt, letting developers focus on application logic instead of debugging authentication or request wrappers.

- Pricing Considerations: Costs are generally structured around tiered monthly plans, per-result pricing, or per-query models. While a free search engine API is useful for prototyping, production environments require transparent pricing. Organizations should forecast throughput to prevent unexpected billing shocks during peak usage.

- Performance and Rate Limits: Engineering teams must evaluate latency (the time it takes for a request to return) and rate limits (the number of requests allowed per second). Limits that are too low cause 429 (Too Many Requests) errors during data bursts, which can leave live monitoring tools out of sync with the web.

- Fit for AI and Analytics Workflows: Make sure the API returns clean JSON to maximize token efficiency in LLM context windows. CatchAll’s output is optimized for AI workflows: it removes unnecessary information so that models receive only relevant text. This helps engineers spend less time on post-processing and increases the accuracy of systems that use retrieval-augmented generation.
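On the client side, rate limits are commonly handled by retrying with exponential backoff when a 429 comes back. The following is a minimal sketch of that technique; the `send` callable, which returns a `(status, body)` pair, and the default delays are assumptions chosen so the example runs without a network.

```python
import time
from typing import Callable, Tuple


def call_with_backoff(
    send: Callable[[], Tuple[int, str]],
    max_attempts: int = 4,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> Tuple[int, str]:
    """Retry on HTTP 429, doubling the delay each time; raise if attempts run out."""
    for attempt in range(max_attempts):
        status, body = send()
        if status != 429:
            # Any other status, success or error, goes back to the caller.
            return status, body
        if attempt < max_attempts - 1:
            sleep(base_delay * 2 ** attempt)
    raise RuntimeError(f"Still rate limited after {max_attempts} attempts")


# Simulated server: two rate-limit responses, then success.
responses = iter([(429, ""), (429, ""), (200, '{"results": []}')])
delays: list[float] = []
status, body = call_with_backoff(lambda: next(responses), sleep=delays.append)
print(status, delays)
```

In the demo the delays are recorded in a list rather than actually slept, which keeps it fast. If a provider sends a `Retry-After` header with its 429 responses, honoring that value is usually preferable to a fixed schedule.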

Summary

A web search API helps researchers and developers scale their data pipelines and ground their AI applications in verifiable facts. Among the growing number of providers, NewsCatcher’s CatchAll stands out with its recall-first approach, which is designed so that no market signal is missed. Organizations that implement these automated workflows can see higher ROI and conversion rates than with traditional search, putting them ahead in the era of automation.

Get started with CatchAll for recall-first web search. If you're benchmarking retrieval tools, here's our latest recall benchmark report.

Documentation: https://www.newscatcherapi.com/docs/web-search-api/get-started/introduction

Questions? Email us at sales@newscatcherapi.com