Compare the best web search APIs in 2026 and find the right tool for your use case, budget, and integration needs.

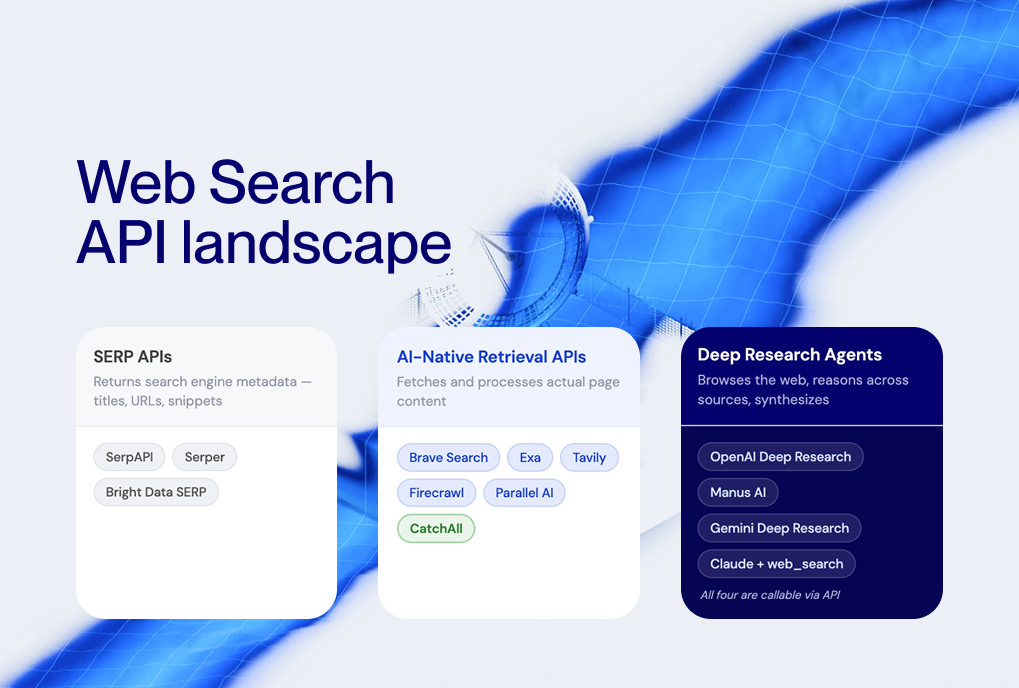

A web search API gives machines programmatic access to web data and lets applications query the web and receive structured results without manual browsing. The web search API category spans from lightweight SERP scrapers to AI-native retrieval pipelines capable of processing tens of thousands of pages per request.

The rise of AI agents, LLM workflows, and automated monitoring systems has created demand for structured web intelligence at machine scale. While standard search engines return top results for human readers, they often fail at technical enumeration tasks that modern data pipelines require, such as tracking every product recall or funding round.

In this guide, we compare the best web search APIs available and offer practical guidance on which type fits which use case.

How did we compare the APIs?

To identify the best web search API for a given use case, we evaluated tools across five criteria:

- Data freshness: The best web search APIs maintain indices that update with over 100 million page changes daily and ensure news is available within minutes of publication.

- Coverage: High-performance tools must scan billions of pages, including trade publications, local news, and niche industry blogs that global engines might deprioritize.

- Output format: Modern workflows require machine-ready data with the noise stripped out, delivered in formats ranging from raw HTML to cleaned text to structured JSON with entity extraction.

- Ease of integration: An easy-to-integrate web search API offers native SDKs and compatibility with frameworks like LangChain.

- Cost efficiency: Evaluation must shift from cost-per-call to cost-per-verified-record, as high-recall tools remove the need for expensive downstream cleaning steps.
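The cost-per-verified-record idea can be made concrete with a small calculation. The sketch below is a minimal illustration: the function name and the two sets of numbers are hypothetical and do not come from any vendor or benchmark.

```python
def cost_per_verified_record(total_cost: float, verified_records: int) -> float:
    """Total spend divided by the number of records that survive verification."""
    if verified_records <= 0:
        raise ValueError("verified_records must be positive")
    return total_cost / verified_records


# Hypothetical numbers, for illustration only.
# Tool A: cheap calls, but most results are duplicates or off-topic.
tool_a = cost_per_verified_record(total_cost=10.0, verified_records=20)
# Tool B: pricier calls, but most results are usable as-is.
tool_b = cost_per_verified_record(total_cost=30.0, verified_records=150)

print(f"Tool A: ${tool_a:.2f} per verified record")
print(f"Tool B: ${tool_b:.2f} per verified record")
```

In this made-up case the tool with the higher bill is the cheaper one per usable record, which is the comparison the criterion asks for.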

Which type of API to use depends on the job:

Best web search APIs in 2026 reviewed

A SERP API, an AI-native retrieval pipeline, and an extraction platform are not competing products — each solves different problems and produces different outputs. Here is the summary table:

1. CatchAll: recall-first web search API for AI

CatchAll is the best web search API for teams that need complete event coverage rather than ranked results. It's an AI-native web search API built around a single design principle: recall first.

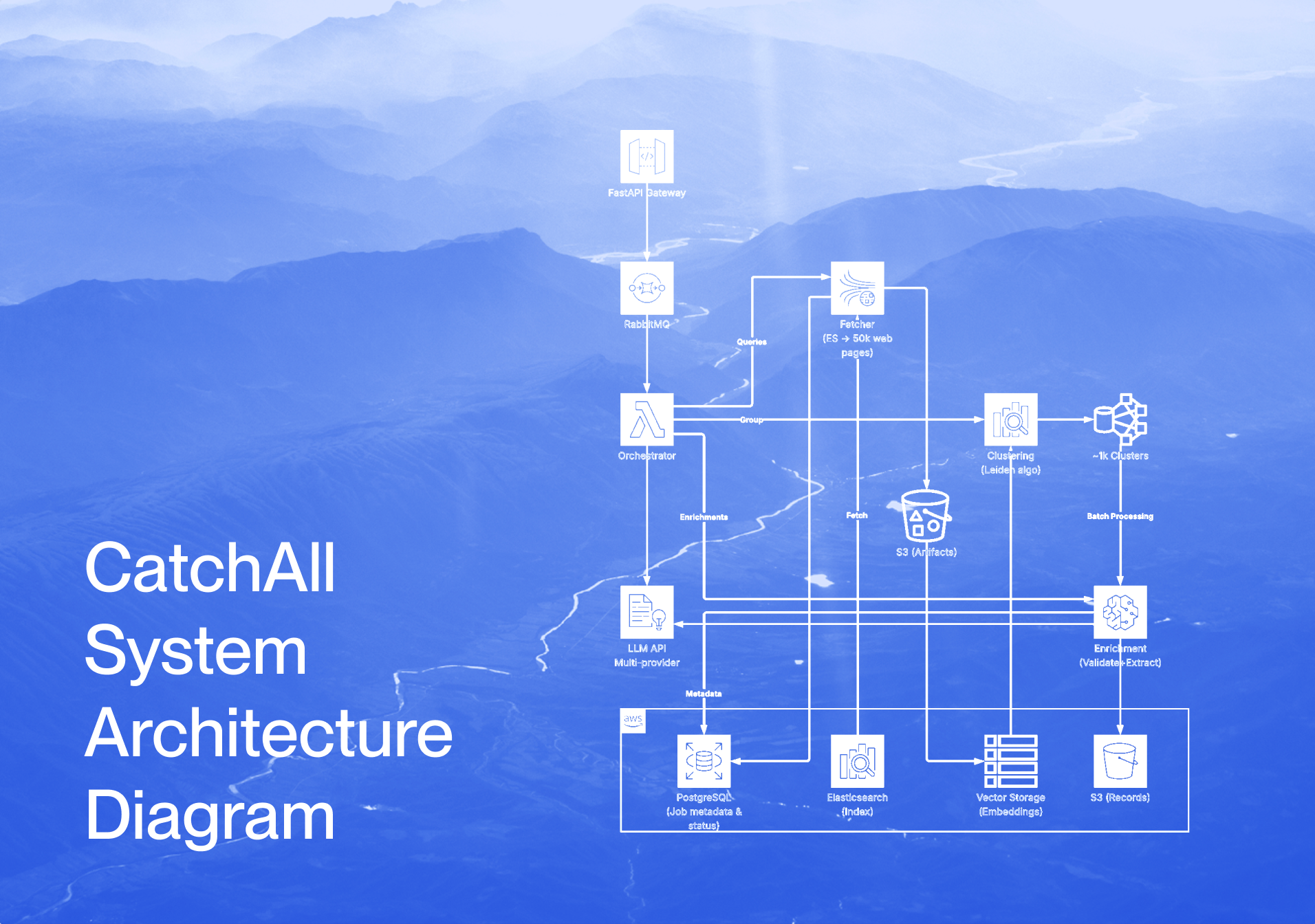

CatchAll processes 50,000+ candidate pages per query through a five-stage pipeline (analyze, fetch, cluster, validate, and extract) and returns structured, deduplicated JSON records validated against your query criteria.

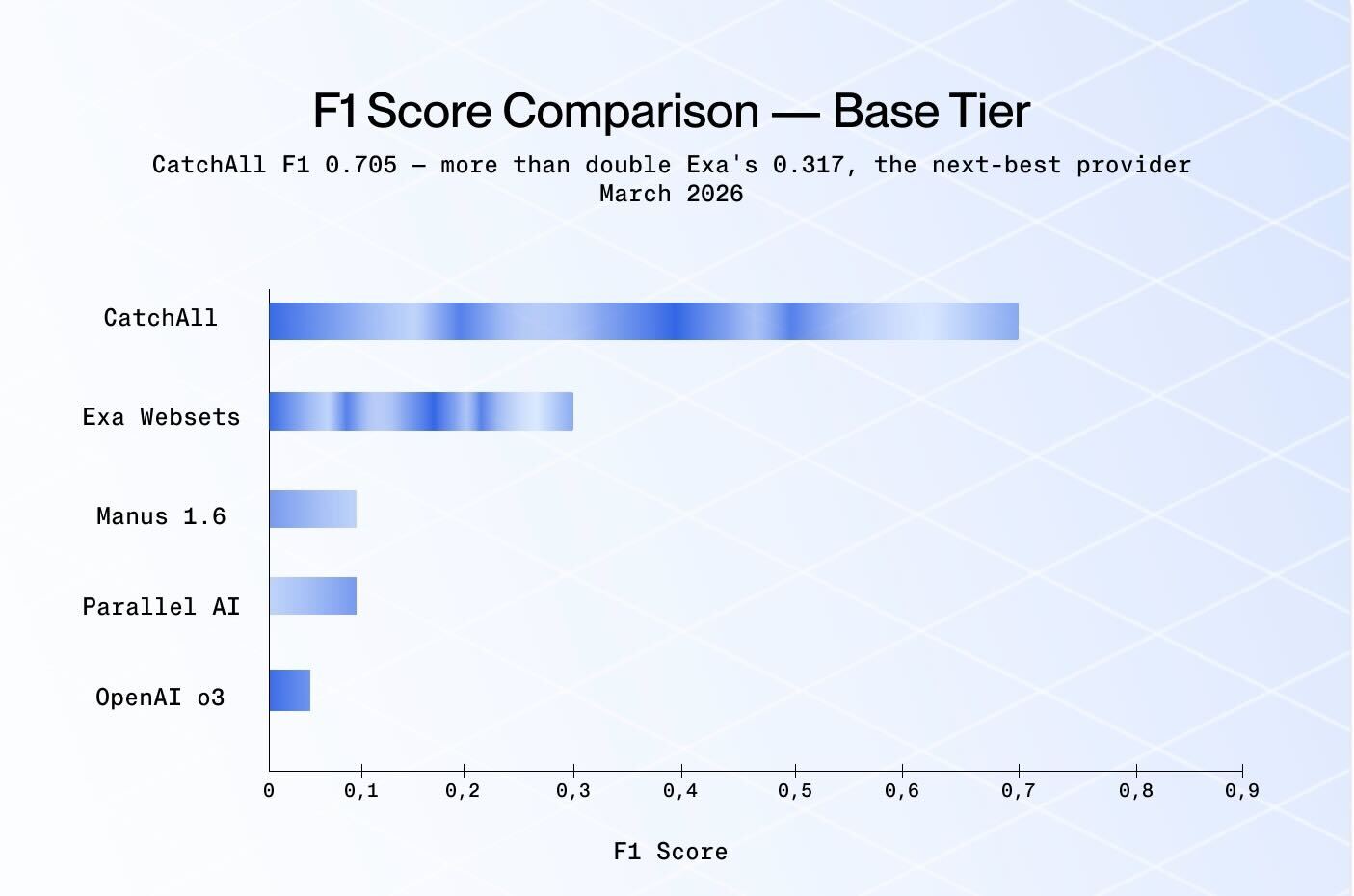

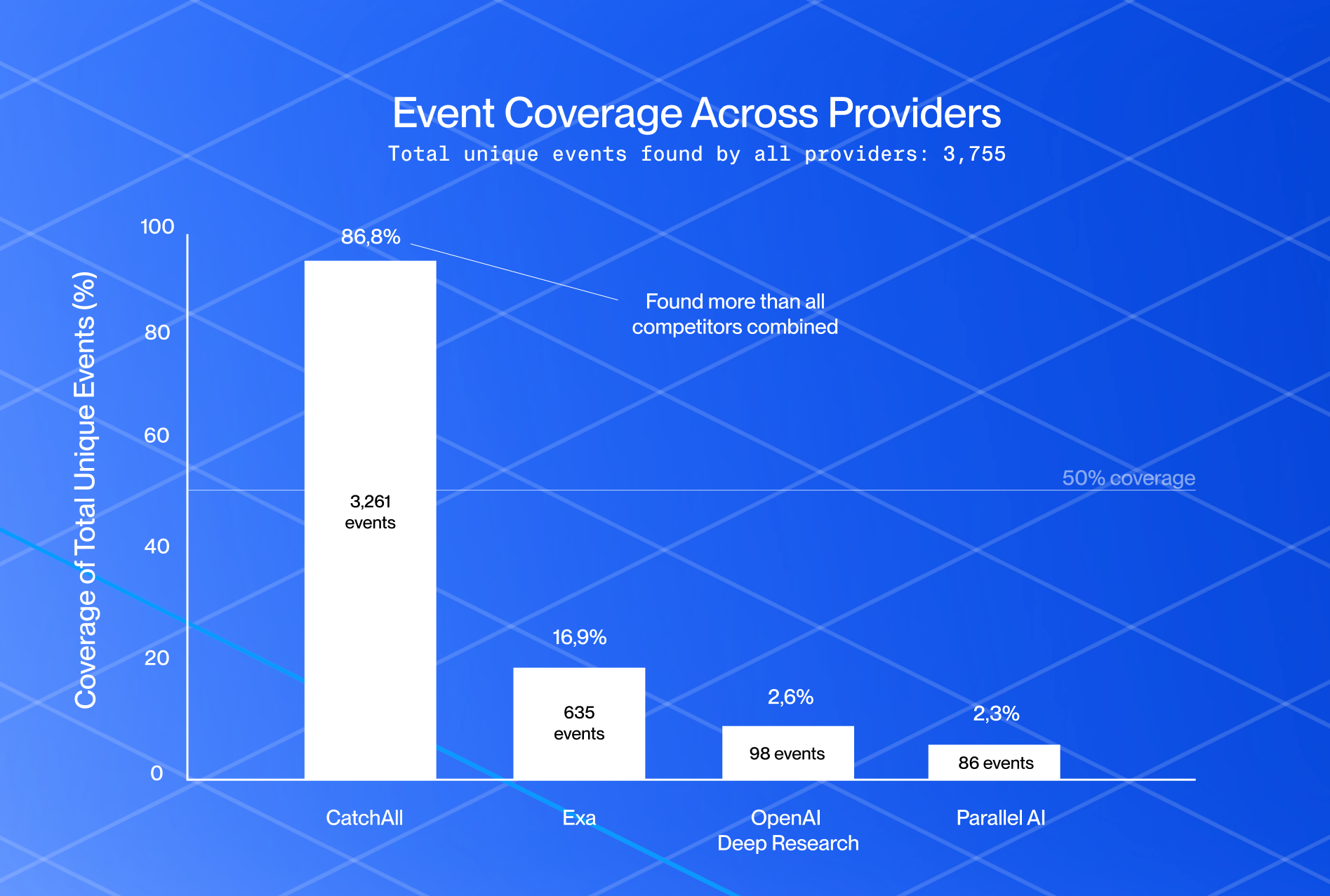

In NewsCatcher's Q1 2026 benchmark across 32 event-detection queries, CatchAll achieved an F1 score of 0.705, more than 2× that of the nearest competitor, Exa, at 0.317.
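For readers unfamiliar with the metric, F1 is the harmonic mean of precision and recall. The sketch below shows the standard formula on hypothetical counts. How the benchmark aggregates scores across its 32 queries is not stated here, so treat this as a definition of the metric, not a reproduction of the benchmark.

```python
def f1_score(true_positives: int, false_positives: int, false_negatives: int) -> float:
    """Harmonic mean of precision and recall; returns 0.0 when undefined."""
    found = true_positives + false_positives      # everything the tool returned
    relevant = true_positives + false_negatives   # everything it should have returned
    if found == 0 or relevant == 0 or true_positives == 0:
        return 0.0
    precision = true_positives / found
    recall = true_positives / relevant
    return 2 * precision * recall / (precision + recall)


# Hypothetical counts: 60 correct events returned, 20 wrong ones, 40 missed.
score = f1_score(true_positives=60, false_positives=20, false_negatives=40)
print(f"F1 = {score:.3f}")
```

Because F1 penalizes missed events as much as wrong ones, a tool that returns a small, clean result set can still score low on enumeration tasks.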

When a user submits a natural-language query, CatchAll analyzes it, fetches and clusters relevant pages from its 2B+ page index, and validates each cluster with an LLM. Then it returns enriched records with extracted entities, source citations, and publication dates.

That makes CatchAll the best web search API with easy integration for data-intensive pipelines: there's no extraction logic to write and no deduplication to maintain.
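To show what the five stages do conceptually, here is a toy, in-memory sketch. It is not CatchAll's implementation or API: every function name, the sample pages, and the keyword check standing in for LLM validation are assumptions made for illustration.

```python
from collections import defaultdict

# Tiny in-memory stand-in for a web index (hypothetical data).
PAGES = [
    {"url": "https://example.com/a", "title": "Acme raises $10M Series A", "date": "2026-01-05"},
    {"url": "https://example.org/b", "title": "Acme raises $10M Series A", "date": "2026-01-06"},
    {"url": "https://example.net/c", "title": "Acme opens new office", "date": "2026-01-07"},
]


def analyze(query):
    """Stage 1: turn the query into lowercase search terms."""
    return set(query.lower().split())


def fetch(terms, pages):
    """Stage 2: keep pages whose title shares at least one term with the query."""
    return [p for p in pages if terms & set(p["title"].lower().split())]


def cluster(pages):
    """Stage 3: group pages reporting the same event (here: identical titles)."""
    groups = defaultdict(list)
    for page in pages:
        groups[page["title"].lower()].append(page)
    return list(groups.values())


def validate(group, required_term):
    """Stage 4: stand-in for an LLM check; a plain keyword test."""
    return required_term in group[0]["title"].lower()


def extract(group):
    """Stage 5: emit one deduplicated record with its source citations."""
    return {
        "event": group[0]["title"],
        "first_seen": min(p["date"] for p in group),
        "sources": [p["url"] for p in group],
    }


def run_pipeline(query, required_term, pages=PAGES):
    candidates = fetch(analyze(query), pages)
    return [extract(g) for g in cluster(candidates) if validate(g, required_term)]


records = run_pipeline("Acme funding raises", required_term="raises")
print(records)
```

The two articles about the same funding round collapse into one record with two sources, and the unrelated office story is fetched but rejected at validation. That is the deduplication and filtering work a recall-first API takes off the caller.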

- Pricing: The Free tier offers 2,000 credits; the Starter plan includes 6,000 credits for $50/month; and the Scale plan costs $500/month with 60,000 credits.

- Best for: Compliance and regulatory monitoring, mergers & acquisitions (M&A), funding tracking, risk intelligence feeds, AI agent data layers, market intelligence pipelines. Any use case where missing events have real consequences.

Start Building with CatchAll. Structured data, real-time coverage, and one-line integration — everything your AI pipeline needs, none of the extraction overhead. Get started for free.

2. SerpAPI

SerpAPI provides structured JSON access to 70+ search engines and marketplaces, including Google, Bing, DuckDuckGo, Yahoo, Amazon, eBay, Walmart, and YouTube. It also covers vertical engines for flights, hotels, jobs, shopping, and news.

It's one of the most comprehensive multi-engine SERP options available. Responses cover the full SERP payload (organic results, ads, knowledge panels, People Also Ask boxes, and other features), but page content is not included.

- Pricing: Free tier offers 250 searches per month, with paid plans starting at $25 monthly for 1,000 searches.

- Best for: Developers building rank tracking tools, technical SEO teams, and multi-engine marketplace monitoring.

3. Zenserp

Zenserp is a Google SERP API that returns structured JSON results for web, image, news, and shopping searches via a simple REST endpoint. It supports localization and language parameters, making it easy to run geo-targeted SERP queries without managing proxies or browser infrastructure.

- Pricing: Free tier includes 50 searches; paid plans start at $49.99 for 25,000 searches.

- Best for: Fast, scalable Google SERP data collection, localization testing, SEO monitoring pipelines that need clean JSON without infrastructure overhead.

4. Firecrawl

Firecrawl is a web-crawling and scraping API that converts any website into clean, structured data in Markdown, JSON, or HTML. Unlike retrieval APIs that discover pages from a query, Firecrawl works on URLs you already have. It also offers a Search endpoint that can return and scrape results in one step, which makes it a practical web crawling API for LLM web search: it turns raw pages into clean, AI-ready formats without custom parsers.

- Pricing: Free tier (500 pages/month); Standard plan is $99/month for 100,000 pages.

- Best for: Turning websites into AI-ready datasets, building RAG grounding layers, and autonomous research tasks.

5. Diffbot

Diffbot uses computer vision and machine learning to extract structured data from web pages, without requiring custom extraction rules. Its Knowledge Graph indexes data from billions of pages and surfaces entity-level facts (companies, people, products, articles) as structured JSON. It is designed for teams that need structured data extraction at scale without writing per-site parsers.

- Pricing: Starts at $299/month for the Enhance API; enterprise pricing for Knowledge Graph access.

- Best for: Automated structured data extraction from arbitrary web pages, knowledge graph queries, and building entity databases without custom parsers.

6. Tavily

Tavily is a web search API purpose-built for RAG and LLM applications, covering web search, content extraction, crawling, and site mapping. It retrieves and processes web content with a focus on returning the most relevant results for AI agent and chatbot use cases. Tavily is not designed for enumeration tasks where the goal is to find every matching event.

- Pricing: Free up to 1,000 searches/month; paid plans start at $30/month.

- Best for: RAG pipelines, conversational AI grounding, single-question answering, and AI agent workflows where top results are sufficient.

7. Exa

Exa is an AI-native retrieval API that uses neural search to find semantically similar content. Exa Websets, its core product for bounded retrieval, is well suited to precision-first queries over small, well-bounded event universes (fewer than ~150 events globally), where you want a highly filtered set rather than everything.

In the Q1 2026 benchmark, Exa achieved an F1 of 0.317 versus CatchAll's 0.705, a difference that reflects design intent rather than a quality failure. For precision-first semantic retrieval on bounded queries, Exa is a strong option. Its cost per verified true positive is $0.290.

- Pricing: Usage-based, with 1,000 requests per month for free; Search costs $7 per 1,000 requests.

- Best for: Semantic search, small bounded event universes (<150 events globally), RAG applications where precision matters more than exhaustive recall.

8. Parallel AI

Parallel AI is an AI-native retrieval API that performed competitively on hyper-local and entity-specific queries in the Q1 2026 benchmark: it outperformed CatchAll on geographically narrow queries, while CatchAll led on broad global and cross-sector queries. Parallel AI's cost per verified true positive is $0.440. It's a practical option for teams running high-frequency, geographically scoped monitoring on a budget.

- Pricing: Search is $5 per 1,000 requests (includes 10 results); Task API scales from $5 to $2,400 per 1k requests.

- Best for: Hyper-local event queries, entity-specific monitoring, high-frequency monitoring on a budget.

9. Apify

Apify is the go-to platform when you need the best web search API for web scraping with full control over how data is collected. It's a cloud-based web scraping and automation platform that lets teams build, deploy, and run custom extraction actors.

It also provides a marketplace of ready-made actors for common sources, including Google Search, Amazon, LinkedIn, and social platforms. Apify gives you direct access to live page content, with built-in configurable extraction logic, scheduling, and proxy rotation.

- Pricing: Free tier with $5 in credit; Starter plan costs $29 monthly.

- Best for: Recurring site-specific data collection, multi-source pipelines, and teams requiring total control over scraping logic and scheduling.

How are web search APIs used in AI and data applications?

For AI and data applications, the best web search API prioritizes recall and structured extraction over simple link ranking.

- AI agents and LLM pipelines require complete, structured data. An agent tasked with "find all funding rounds in European fintech last month" needs a recall-first API, as a SERP API returns links, not records.

- Continuous event and news monitoring requires fresh, deduplicated data that maps directly into a database or alert pipeline. SERP APIs return what search engines surface at query time, not necessarily all events that occurred. For monitoring use cases where completeness matters, the key distinction between a SERP API and a recall-first pipeline is sampling versus coverage.

- Market intelligence and competitive analysis benefit from broad geographic and source coverage. Regional press and non-English publications often carry signals first; APIs that index only top-tier English sources miss them.

- Data pipelines and automation need consistent, machine-readable output. Structured JSON with validated fields significantly reduces downstream processing compared to raw HTML or SERP snippets. This is where web scraping and retrieval use cases diverge: scraping platforms like Apify give you source control and custom extraction logic, while recall-first retrieval pipelines like CatchAll give you coverage across the open web.
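The downstream processing mentioned in the last bullet usually means field validation and deduplication. The sketch below shows that step for a JSON payload. The field names (`title`, `published`, `source_url`) and the sample data are hypothetical and do not reflect any specific API's response schema.

```python
import json
from dataclasses import dataclass

REQUIRED = ("title", "published", "source_url")  # hypothetical schema


@dataclass(frozen=True)
class EventRecord:
    title: str
    published: str
    source_url: str


def load_records(payload: str) -> list[EventRecord]:
    """Parse a JSON array, drop incomplete rows, dedupe on (title, published)."""
    seen = set()
    records = []
    for row in json.loads(payload):
        if not all(row.get(field) for field in REQUIRED):
            continue  # missing or empty required field
        key = (row["title"].strip().lower(), row["published"])
        if key in seen:
            continue  # same event already recorded
        seen.add(key)
        records.append(EventRecord(*(row[field] for field in REQUIRED)))
    return records


sample = json.dumps([
    {"title": "Recall issued", "published": "2026-02-01", "source_url": "https://example.com/1"},
    {"title": "recall issued ", "published": "2026-02-01", "source_url": "https://example.org/2"},
    {"title": "No date here", "source_url": "https://example.net/3"},
])
clean = load_records(sample)
print(clean)
```

When an API already returns validated, deduplicated records, this step shrinks to a schema check. With raw HTML or SERP snippets, it sits behind a parser you also have to write and maintain.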

How to choose the right web search API

Choosing the right tool depends on whether you are optimizing for recall (finding everything), precision (finding the best single answer), or SEO analytics (finding rankings).

- Choose an AI-native recall-first API when you need page content and you're building a monitoring feed, event dataset, or AI agent data layer. For comprehensive coverage at scale, CatchAll is the go-to option because it takes a natural-language query in and returns validated, structured JSON. For precision-first or semantically bounded queries, choose Exa; for hyper-local queries on a budget, Parallel AI; for RAG and single-question answering, Tavily.

- Choose a SERP API (SerpAPI, Zenserp) when your use case is rank tracking, SERP feature analysis, or URL discovery as a first step in a pipeline you've already built.

- Choose an extraction platform (Apify, Firecrawl, Diffbot) when you have a known source list and need full control over how data is extracted, or when you need to turn arbitrary web pages into structured records without custom parsing rules.

- On cost: Don't compare per-call pricing across categories. In the Q1 2026 benchmark, CatchAll's cost per verified true positive was $0.185, compared to $0.290 for Exa and $0.440 for Parallel AI.
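The three benchmark figures quoted above are easier to compare as ratios. The short sketch below uses only the numbers stated in this guide. The variable names are illustrative.

```python
# Cost per verified true positive (USD), as quoted from the Q1 2026 benchmark.
costs = {"CatchAll": 0.185, "Exa": 0.290, "Parallel AI": 0.440}

baseline = min(costs.values())
relative = {name: round(cost / baseline, 2) for name, cost in costs.items()}

for name, ratio in sorted(relative.items(), key=lambda item: item[1]):
    print(f"{name}: {ratio}x the lowest cost per verified true positive")
```

On these figures, Exa costs roughly 1.6× and Parallel AI roughly 2.4× as much per verified true positive as CatchAll, whatever the per-call list prices suggest.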

FAQs

What are the best web search APIs with easy integration?

For SEO data, Serper and Zenserp offer low-friction REST APIs with generous free tiers. For AI data pipelines, CatchAll is the best web search API with easy integration because it accepts natural-language queries and returns validated JSON, removing the need for manual extraction logic.

What are the key use cases for web search APIs?

Web search APIs are used for SEO monitoring and rank tracking (SERP APIs), AI agent data grounding (recall-first retrieval), continuous event and news monitoring (AI-native retrieval), market intelligence and competitive analysis (SERP or AI-native), and web crawling from known URLs (Firecrawl).

Which web search API offers the best results?

It depends on the definition of best. For recall (finding all events), CatchAll achieved an F1 score of 0.705 in the Q1 2026 benchmark across 32 queries, more than 2× that of the nearest competitor. For multi-engine SERP features, SerpAPI covers 70+ search engines and marketplaces. For semantic precision, Exa is optimized for high-quality, meaning-based discovery. See the Q1 2026 benchmark for full results.

Further Reading: