CatchAll F1 moved from 0.527 to 0.705 in Q1 2026 — winning 27 of 32 queries against Exa (0.317), Parallel AI, Manus, and OpenAI. Fairer evaluation pipeline, new precision filter, full cost breakdown, and where competitors still win.

In January, we published a web search API benchmark showing CatchAll finds roughly 5× more relevant events than the closest competitor — and committed to re-running it every quarter. We ran it again in March, against a larger field, with a stricter evaluation pipeline. Precision went from 0.437 to 0.632. F1 went from 0.527 to 0.705.

This post covers what we measured, what changed, and where we still lose.

TL;DR

- 32 queries, 5 providers in Base mode, 4 in Lite mode; Manus AI added as a new competitor

- CatchAll Base leads the field: F1 0.705 vs Exa's next-best 0.317, winning 27 of 32 queries

- Quarter-over-quarter: F1 0.527 → 0.705, Precision 0.437 → 0.632, Recall 86.8% → 79.8%

- We shipped a precision filter for Base mode — it removes irrelevant records before delivery, with a real but acceptable recall cost

- New: CatchAll Lite, benchmarked at $1/query, with F1 0.512 within its tier, though in a genuinely more competitive field

- Competitor evaluation is now more accurate: forced true positives (results counted as relevant without being checked) dropped from 40–70% in January to 6–17% in March

Why we ran it again

The January post committed to quarterly reruns, honest reporting of regressions, and raw data on request. This is the first rerun.

Three things changed.

The evaluation pipeline got fairer. In January, URLs we couldn't fetch were counted as true positives so that competitors weren't penalized for something outside their control. As a result, 40–70% of some competitors' TPs were "forced": assumed relevant but never actually checked. We now retrieve content from ~94% of competitor URLs. Forced true positives for Exa dropped to 6.4%; for Parallel AI, to 16.8%. The scores here are substantially more trustworthy.
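A minimal sketch of how a forced true positive share could be computed for one provider. The `JudgedResult` type, the function names, and the sample data are hypothetical, and the share is assumed to be forced TPs divided by all TPs (verified plus forced); the actual evaluation code may differ.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class JudgedResult:
    url: str
    fetched: bool              # True if the page content could be retrieved
    relevant: Optional[bool]   # judge verdict; None when nothing was fetched


def count_true_positives(results: list[JudgedResult]) -> tuple[int, int]:
    """Return (verified_tp, forced_tp) for one provider's results."""
    verified = sum(1 for r in results if r.fetched and r.relevant is True)
    # Unfetchable URLs get the benefit of the doubt and are counted as TPs.
    forced = sum(1 for r in results if not r.fetched)
    return verified, forced


def forced_tp_share(results: list[JudgedResult]) -> float:
    """Fraction of a provider's true positives that were never checked."""
    verified, forced = count_true_positives(results)
    total = verified + forced
    return forced / total if total else 0.0


# Illustrative data only, not taken from the benchmark.
sample = [
    JudgedResult("https://example.com/a", fetched=True, relevant=True),
    JudgedResult("https://example.com/b", fetched=True, relevant=True),
    JudgedResult("https://example.com/c", fetched=True, relevant=False),
    JudgedResult("https://example.com/d", fetched=False, relevant=None),
]
print(count_true_positives(sample))       # (2, 1)
print(f"{forced_tp_share(sample):.1%}")   # 33.3%
```

The higher this share, the more a provider's reported precision rests on unchecked results.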

We shipped a precision filter. After enrichment, each record is now checked against the original query before delivery, and irrelevant results are dropped. In relative terms, precision improved 44.6% to 0.632, recall fell 8.1% to 0.798, and F1 improved 33.8% to 0.705.
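The percentage changes above are relative to the January values. A short check of that arithmetic, using the January figures quoted in this post (precision 0.437, recall 0.868, F1 0.527); the helper name is illustrative:

```python
def relative_change(before: float, after: float) -> float:
    """Change from `before` to `after`, as a fraction of `before`."""
    return (after - before) / before


precision_change = relative_change(0.437, 0.632)
recall_change = relative_change(0.868, 0.798)
f1_change = relative_change(0.527, 0.705)

print(f"precision {precision_change:+.1%}")  # precision +44.6%
print(f"recall    {recall_change:+.1%}")     # recall    -8.1%
print(f"F1        {f1_change:+.1%}")         # F1        +33.8%
```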

We expanded the scope. Manus AI joins the benchmark, the query set was refreshed, and CatchAll is now tested in two modes: Base and Lite.

For the full methodology, see the January post.

Benchmark setup

32 queries, March 2026

The query structure is the same as January: time-bounded event detection across eight categories — funding, labor, regulatory, accidents, real estate, government grants, tech outages, and data center expansions. The 5 new queries push into harder territory: three ask about Chinese government policy signaling (broad, geopolitical, 24-day windows) and two are hyper-local (immigration enforcement in a single US state; business openings in a specific Connecticut county). We included them deliberately to test edge cases where we expected to underperform.

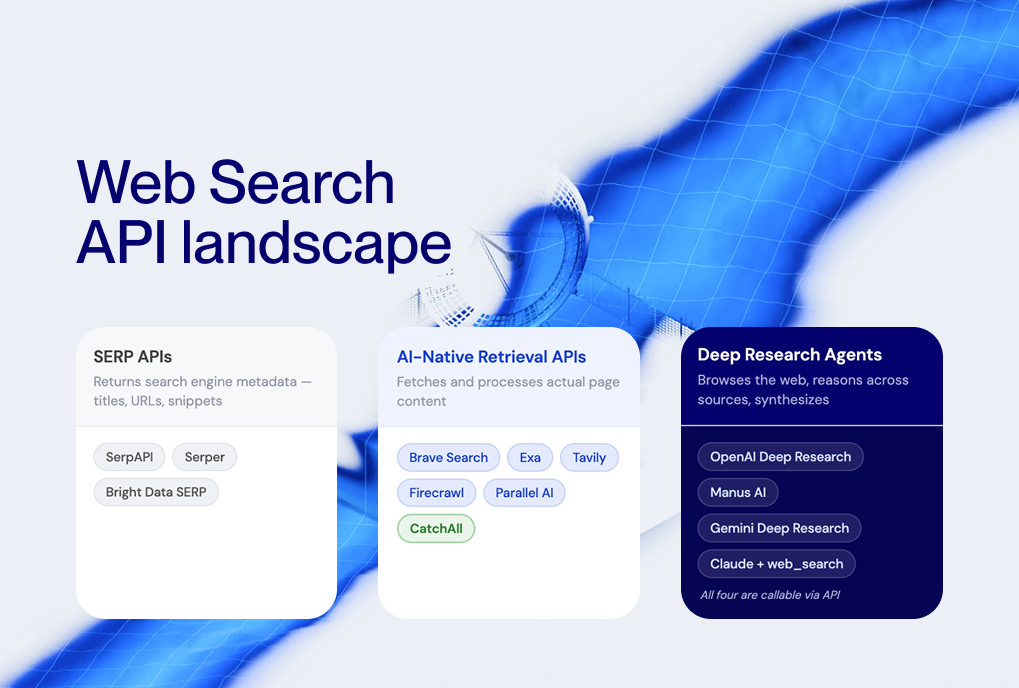

Two tiers

This benchmark covers two different CatchAll operating modes, which we’re calling Base and Lite. They’re not just the same product at different price points — they work differently.

Base runs deep. It processes thousands of candidate web pages per query, enriches each record with structured extraction (company names, event types, affected counts, and so on), and returns fully validated records. Pricing is $0.10 per returned record.

Lite runs shallow. It processes ~400 candidates per query, validates each on a binary yes/no relevance check, and returns event titles plus citation links — no enrichment fields. Pricing is $1.00 per query, flat.

The competitor set also differs between tiers. Exa Websets is a Base-tier product and doesn’t appear in Lite. The Lite field is: CatchAll Lite, Parallel AI (Base generator), Manus 1.6 Lite, and OpenAI o4-mini-deep-research.

One important note before the numbers: Lite results overlap with Base results but are not a strict subset of them, so the two tiers are not directly comparable on recall. The two experiments have different provider sets and therefore different observable universes: 6,025 unique true positives in Base, 860 in Lite. We haven’t run cross-tier deduplication, so we can’t say precisely how much the Lite true positives overlap with the Base ones. We report within-tier recall only.

Metrics

Primary metric: F1 score, the harmonic mean of precision and recall. We compute it as a weighted aggregate, so large queries count proportionally more than small ones.
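To make the weighting concrete, here is a sketch that contrasts a pooled (count-weighted) F1 with a simple per-query average. The post does not spell out the exact weighting scheme, so pooling TP/FP/FN counts across queries is an assumption, and the two sample queries are invented for illustration.

```python
def f1_score(precision: float, recall: float) -> float:
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


def precision_recall(tp: int, fp: int, fn: int) -> tuple[float, float]:
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


def pooled_f1(queries: list[tuple[int, int, int]]) -> float:
    """Sum counts over all queries first, so large queries weigh more."""
    tp = sum(q[0] for q in queries)
    fp = sum(q[1] for q in queries)
    fn = sum(q[2] for q in queries)
    return f1_score(*precision_recall(tp, fp, fn))


def macro_f1(queries: list[tuple[int, int, int]]) -> float:
    """Average per-query F1, so every query weighs the same."""
    return sum(f1_score(*precision_recall(*q)) for q in queries) / len(queries)


# (tp, fp, fn) per query: one large query, one small one. Illustrative only.
queries = [(80, 20, 20), (1, 9, 9)]
print(f"pooled F1: {pooled_f1(queries):.3f}")  # pooled F1: 0.736
print(f"macro F1:  {macro_f1(queries):.3f}")   # macro F1:  0.450

# The headline Base figures are consistent with the harmonic mean:
print(f"{f1_score(0.632, 0.798):.3f}")         # 0.705
```

In the pooled version the small, noisy query barely moves the aggregate, which matches the statement that large queries count proportionally more.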

Observable recall is bounded by what all providers found combined, not by all events that actually occurred. If 500 acquisitions happened globally, but every tool together found 329, recall is computed against 329. This limitation applies equally to all providers, so relative comparisons remain meaningful.
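A small sketch of observable recall, assuming each provider's verified true positives have already been deduplicated into shared event IDs. The provider names and event IDs are placeholders.

```python
def observable_recall(found_by_provider: dict[str, set[str]]) -> dict[str, float]:
    """Recall of each provider against the union of everything anyone found."""
    universe: set[str] = set().union(*found_by_provider.values())
    if not universe:
        return {name: 0.0 for name in found_by_provider}
    return {
        name: len(events) / len(universe)
        for name, events in found_by_provider.items()
    }


# Illustrative data: four distinct events were found across both providers.
found = {
    "provider_a": {"e1", "e2", "e3"},
    "provider_b": {"e3", "e4"},
}
recalls = observable_recall(found)
print(recalls)  # {'provider_a': 0.75, 'provider_b': 0.5}
```

Events that no provider found never enter `universe`, which is why absolute recall cannot be measured this way.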

Base mode results

CatchAll leads on F1 (0.705), recall (79.8%), and total events found (4,807). Exa leads on precision (0.837). Parallel AI Core and Manus 1.6 cluster around F1 0.103–0.104. OpenAI o3-deep-research at 0.017 is well below all others.

The precision-recall tradeoff

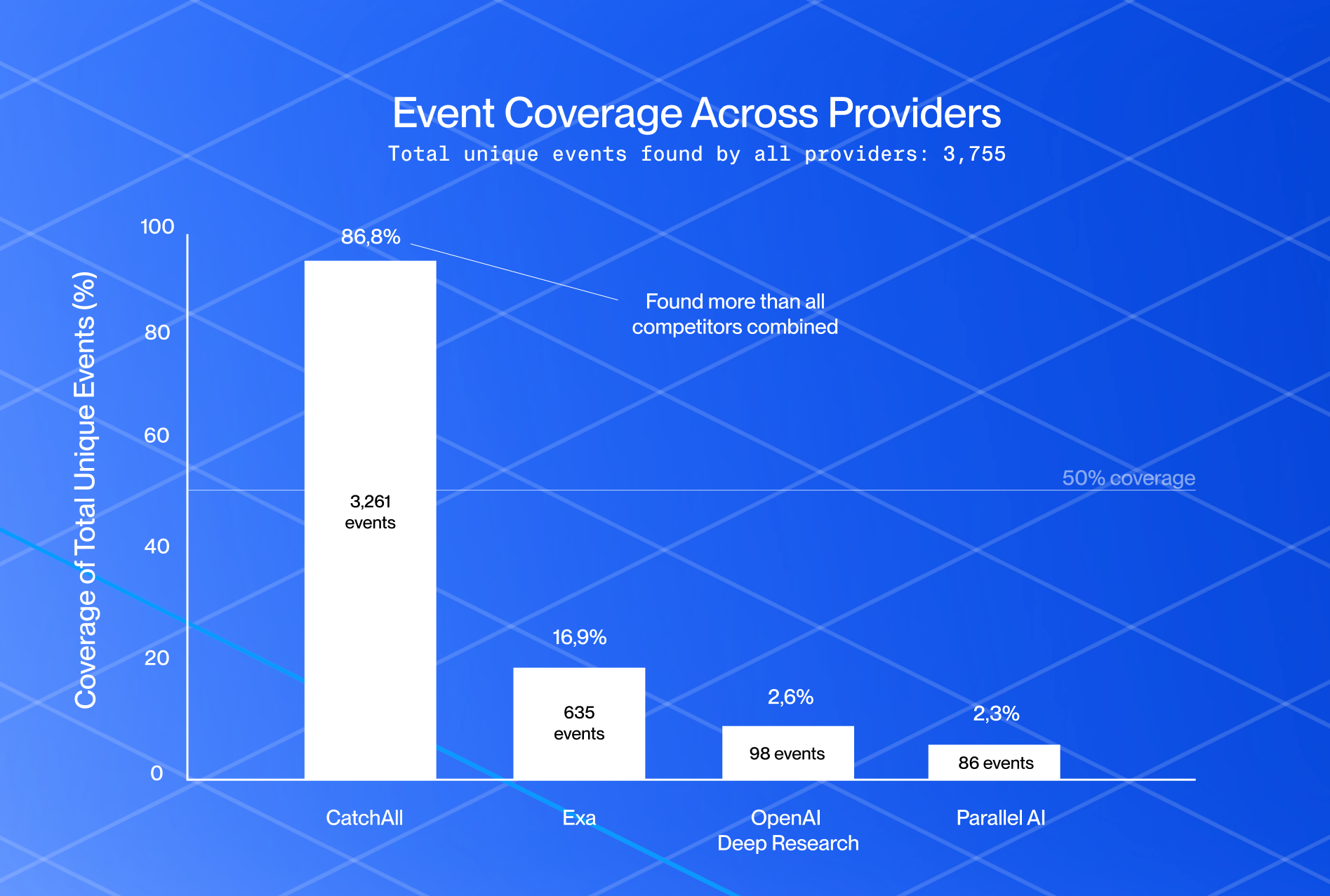

The precision filter that shipped in March improved precision from 0.437 to 0.632 — a 44.6% gain. Recall came in at 79.8%, down from 86.8% in January. That tradeoff is intentional — here's the case for why recall matters more than precision.

Both things are true, and both need saying: the filter genuinely improved signal quality, and it did cost some recall. Any filter that removes false positives will remove some true positives too — that’s unavoidable. The two experiments also aren’t a clean before/after: different queries, different timeframe, different competitors. So the most defensible cross-run statement is the F1 score, which moved from 0.527 to 0.705, accounting for both sides of the tradeoff.

Q4 2025 vs Q1 2026: CatchAll head-to-head

Coverage

CatchAll alone covers 79.8% of the observable universe across 32 queries. The remaining 20% is distributed across competitors: Exa found 844 unique events that CatchAll missed (14.0% of the universe), Parallel AI found 242 (4.0%), Manus 206 (3.4%), and OpenAI 53 (0.9%).

Running CatchAll alongside Exa covers roughly 94% of the observable universe. Whether that marginal 14% is worth the additional cost depends entirely on your use case.
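The coverage figures follow directly from the counts reported in this post (a Base universe of 6,025 events, 4,807 found by CatchAll, 844 found by Exa but missed by CatchAll). A quick check, with variable names chosen for illustration:

```python
UNIVERSE = 6025            # unique true positives across all Base providers
CATCHALL_TP = 4807         # events CatchAll found
EXA_ONLY = 844             # events Exa found that CatchAll missed


def share(count: int, universe: int = UNIVERSE) -> float:
    return count / universe


catchall_coverage = share(CATCHALL_TP)
# Exa's 844 are by definition disjoint from CatchAll's finds, so they add up.
combined_with_exa = share(CATCHALL_TP + EXA_ONLY)

print(f"CatchAll alone: {catchall_coverage:.1%}")   # CatchAll alone: 79.8%
print(f"CatchAll + Exa: {combined_with_exa:.1%}")   # CatchAll + Exa: 93.8%
```

Only one competitor is added here because the post does not say how much the competitors' additional finds overlap with each other.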

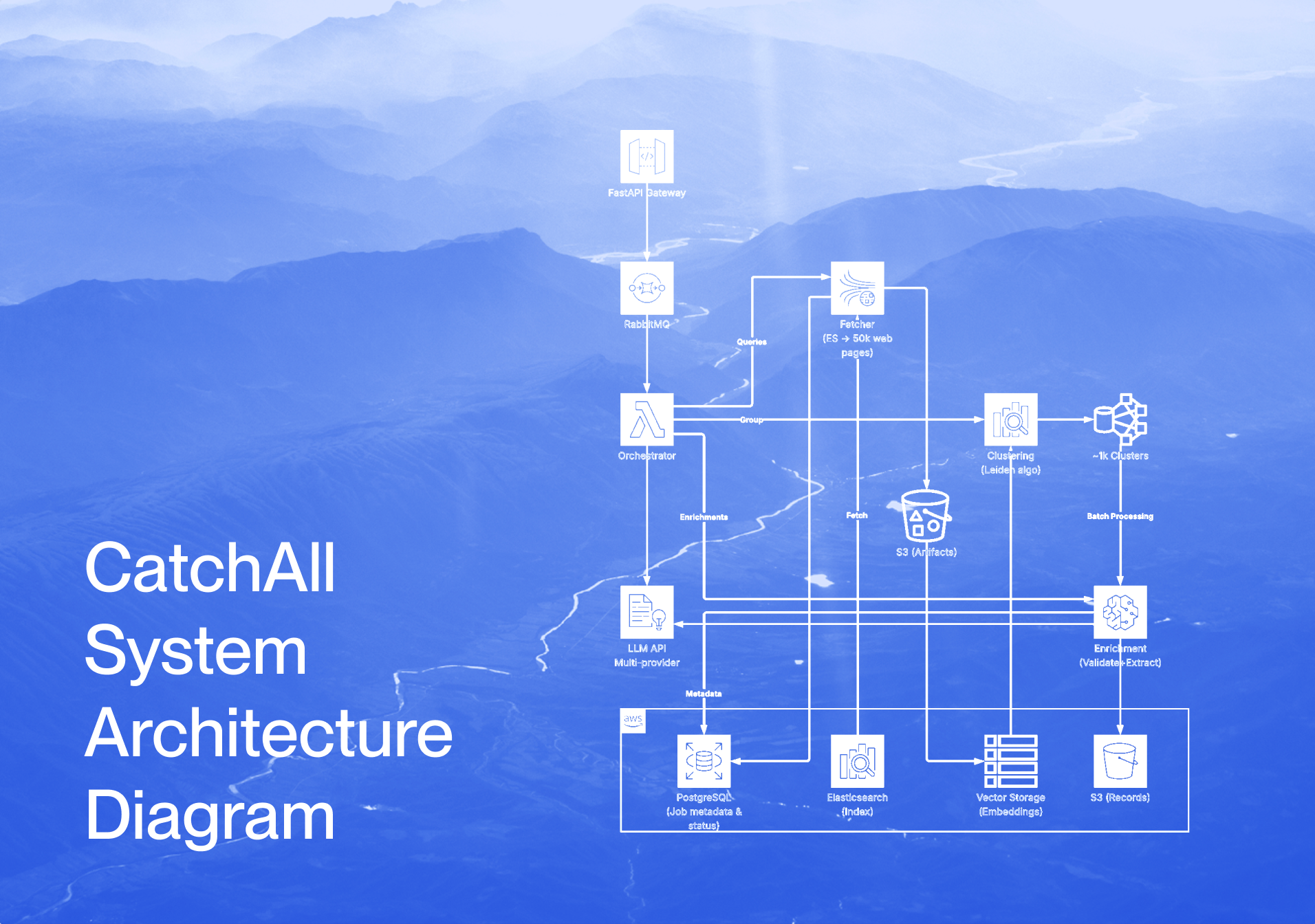

For a look at the pipeline architecture behind this coverage — Leiden clustering, meta-prompting, 50k pages per query — see how we built the recall-first pipeline.

Cost efficiency

CatchAll costs $0.185 per verified relevant event ($889.50 total; 8,895 raw records at $0.10 each). Exa costs $0.290/TP. Parallel AI Core $0.440/TP. Manus 1.6 $0.774/TP. OpenAI $0.854/TP.

CatchAll’s higher absolute cost ($890 vs OpenAI’s $45) reflects scale: it finds 91x more verified relevant events.
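The cost-per-event figure can be reproduced from the numbers above. In this sketch the OpenAI true positive count is not a reported value: it is inferred from the rounded $45 total and $0.854/TP figures, so the scale ratio is only a rough check.

```python
def cost_per_tp(total_cost: float, true_positives: float) -> float:
    """Dollars spent per verified relevant event."""
    return total_cost / true_positives


# CatchAll Base: 8,895 records at $0.10 each (computed in cents to stay exact).
base_total = 8895 * 10 / 100
base_cost_per_tp = cost_per_tp(base_total, 4807)
print(f"${base_total:.2f} total, ${base_cost_per_tp:.3f}/TP")  # $889.50 total, $0.185/TP

# Rough check of the scale claim, using rounded figures from the post.
openai_implied_tp = 45 / 0.854          # about 53 events (inferred, not reported)
scale_ratio = 4807 / openai_implied_tp
print(f"about {scale_ratio:.0f}x more events")  # about 91x more events
```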

Where CatchAll wins — and where it doesn’t

CatchAll leads most categories and wins 27 of 32 queries on F1. The strongest categories are Funding & Financial (F1 0.819), China Policy (0.802), and Labor & Employment (0.745). These are high-volume, broad-scope queries across multiple geographies — exactly the use case the architecture is built for.

Three queries go to Exa: Taiwan workplace accidents (Exa 0.894 vs CatchAll 0.400), Texas workplace accidents (0.660 vs 0.472), and malls opened globally (0.438 vs 0.274). The pattern is consistent: when the true event universe is small — 47 to 137 events — Exa’s precision-first approach finds the core set cleanly. CatchAll’s broader scan picks up noise that overwhelms the small signal.

Two queries go to Parallel AI: New Mexico immigration enforcement (0.609 vs CatchAll 0.242) and business openings in Fairfield County, Connecticut (0.585 vs 0.194). These are hyper-local entity-specific queries with around 26–27 events in the universe. At this scale, an entity-search tool with tight geographic matching outperforms a coverage-first approach.

Lite mode results

CatchAll Lite leads on F1 (0.512) and total events found (458 TPs). But the Lite tier is more competitive: Parallel AI’s Base generator wins 12 of 32 queries to CatchAll’s 11, and Manus 1.6 Lite wins 7. This is a meaningfully different picture from Base mode, where CatchAll wins 27 of 32.

Parallel AI leads in several categories where it struggles in Base: China policy queries (F1 0.657 vs CatchAll Lite’s 0.549), data center expansions (0.508 vs 0.333), and niche geographic queries (0.597 vs 0.185). For these query types at the Lite-tier budget, it’s worth evaluating.

Manus 1.6 Lite has the highest precision in the Lite tier (0.769), but carries a caveat: its forced true positive rate is 18.2%, the highest of any provider in either tier. That precision figure is optimistic.

Base vs Lite: CatchAll head-to-head

CatchAll Lite costs $31.00 for 32 queries ($1.00/query flat, with no charge for the 1 query that returned zero records) and finds 458 verified, relevant events at $0.068/TP. CatchAll Base costs $889.50 and finds 4,807 events at $0.185/TP. Lite is 2.7x cheaper per relevant event found, but it finds 10.5x fewer of them, and it includes no structured data extraction.

The Parallel AI reversal

One finding worth flagging: Parallel AI’s Base generator — used in the Lite tier — achieves F1 0.406, while their Core generator — used in the Base tier — achieves F1 0.103. The cheaper mode outperforms the expensive one on this benchmark by a wide margin. We don’t have an explanation for this, but anyone evaluating Parallel AI should be aware of it.

Choosing the right web search API

CatchAll Base is the right choice when comprehensive coverage matters — global event monitoring, M&A databases, regulatory tracking, and incident feeds. It wins on F1 across most query categories and finds more relevant events per dollar than any other tool in this field.

CatchAll Lite makes sense when cost is the primary constraint and moderate coverage is sufficient — daily signal sweeps, early-stage triage, high-frequency queries where you investigate further before acting.

Exa Websets outperforms CatchAll in queries with small, well-bounded event universes (under ~150 events globally). Its precision-first approach shines when the signal is narrow.

Parallel AI (Base generator in Lite, Core in Base) performs well on entity-specific and hyper-local queries — particularly the two queries in this benchmark with fewer than 30 events in the universe. Note the intra-product reversal described above before choosing a tier.

OpenAI Deep Research underperformed on this benchmark (F1 0.017 in Base and 0.109 in Lite). It's better suited to synthesizing narratives than exhaustive event detection.

Methodology notes

Recall is relative, not absolute. All recall figures are computed against the observable universe — events found by at least one provider. True absolute recall is lower for everyone and not measurable.

Cross-tier recall is not reported. Base and Lite are standalone experiments with separate provider sets and distinct observable universes. We haven't cross-verified the two runs; Lite figures are within-tier only.

Parallel AI forced TPs (16.8% in Base). Down substantially from January's ~40–70%, but the highest remaining residual in Base. Their precision of 0.777 is somewhat optimistic as a result.

Manus Lite forced TPs (18.2%). Same caveat for Manus Lite's precision of 0.769.

The Q4 2025 vs Q1 2026 comparison isn't a controlled experiment. The two runs used slightly different queries, different timeframes, and different competitor sets. F1 is the most defensible cross-run metric because it accounts for both precision and recall, but treat the comparison as directionally informative rather than a rigorous A/B test.

Gemini and Claude aren't in the Q1 2026 benchmark, but both can be accessed programmatically. Gemini is available through the Google Gen AI SDK with a polling pattern; its Interactions API is in preview, lacks a cancel endpoint, and has unpredictable runtimes. Claude has no dedicated deep research model in the API, but the Anthropic Messages API with a web_search tool shows comparable performance. Both are being considered for the Q2 benchmark.

What’s next

We run these benchmarks quarterly. The next run will cover an expanded query set and updated provider configurations. We’ll publish the results whether they go up or down.

Raw data and the full query list are available on request. If you spot a flaw in the methodology, reach out — we’d rather fix it than defend it.

Both modes are available now. Start with 2,000 free credits at platform.newscatcherapi.com — that’s 20 Lite queries or enough to explore Base across several topics.

Questions: support@newscatcherapi.com.

Evaluation period: March 1–7, 2026 (extended to March 1–24 for 5 queries). For December 2025 results and full methodology, see our January benchmark post.